The Sui Developer Stack: Powering the Agentic Web

AI and crypto aren’t two separate revolutions happening in parallel; they’re two halves of the same transformation, and they’re about to collide. This collision will create the biggest platform shift since mobile, and whoever provides the right infrastructure for this shift will become the foundation layer of the autonomous internet.

Fair warning: 15-minute read. Grab a coffee!


The Irreversible Curve

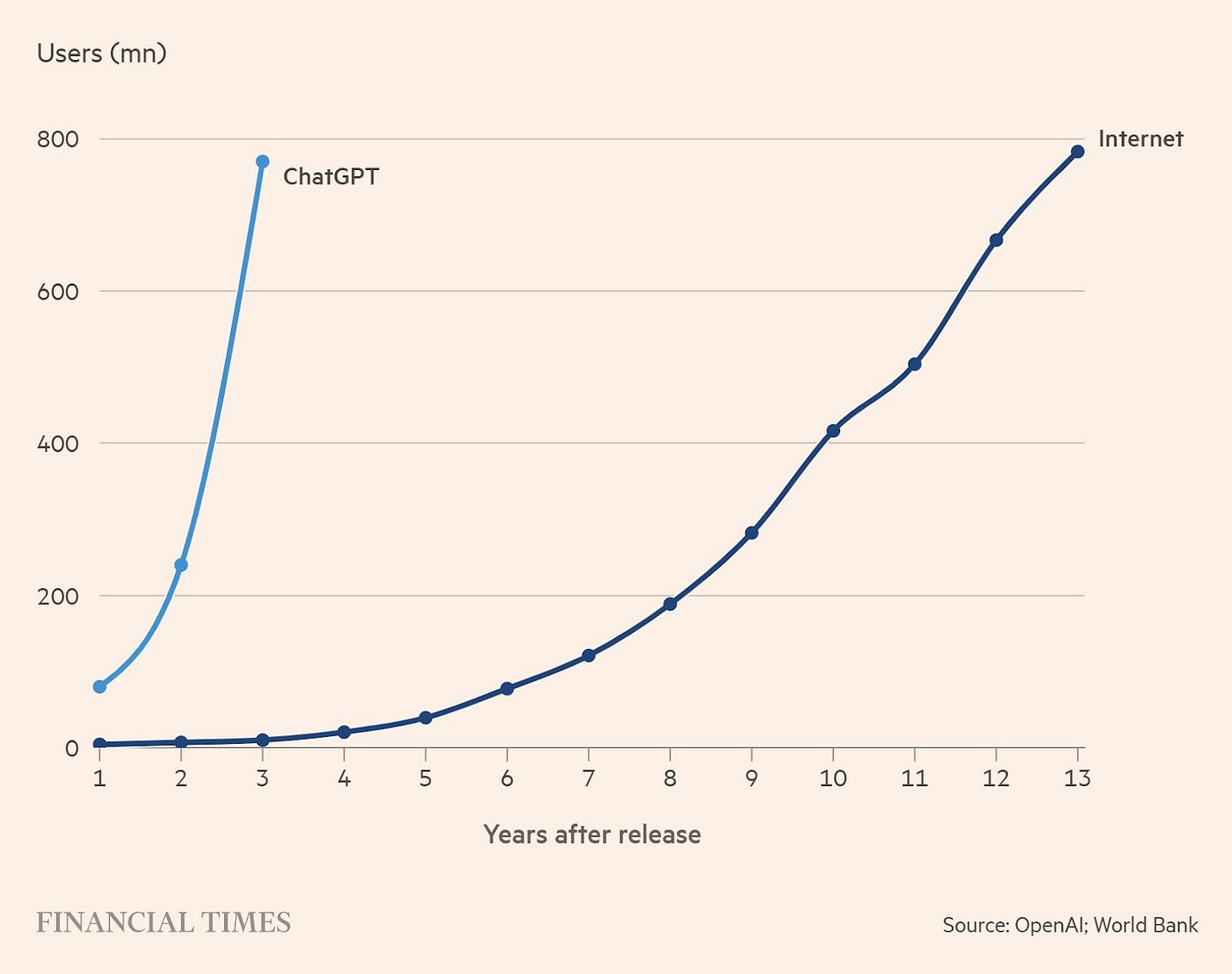

AI is on an irreversible adoption curve, a secular trend of technological progress that is accelerating and not looking back.

Across industries, AI uptake is growing at an unprecedented pace.

For example, the U.S. Census Bureau reported that the percentage of companies using AI rose from 5.7% at the end of 2024 to 9.2% by mid-2025.

To put that in perspective, AI adoption is now on the verge of crossing the critical 10% threshold, just a few years into the current wave.

This is a milestone that took earlier technologies (like e-commerce) decades to reach, and some sectors already report 25–30% adoption.

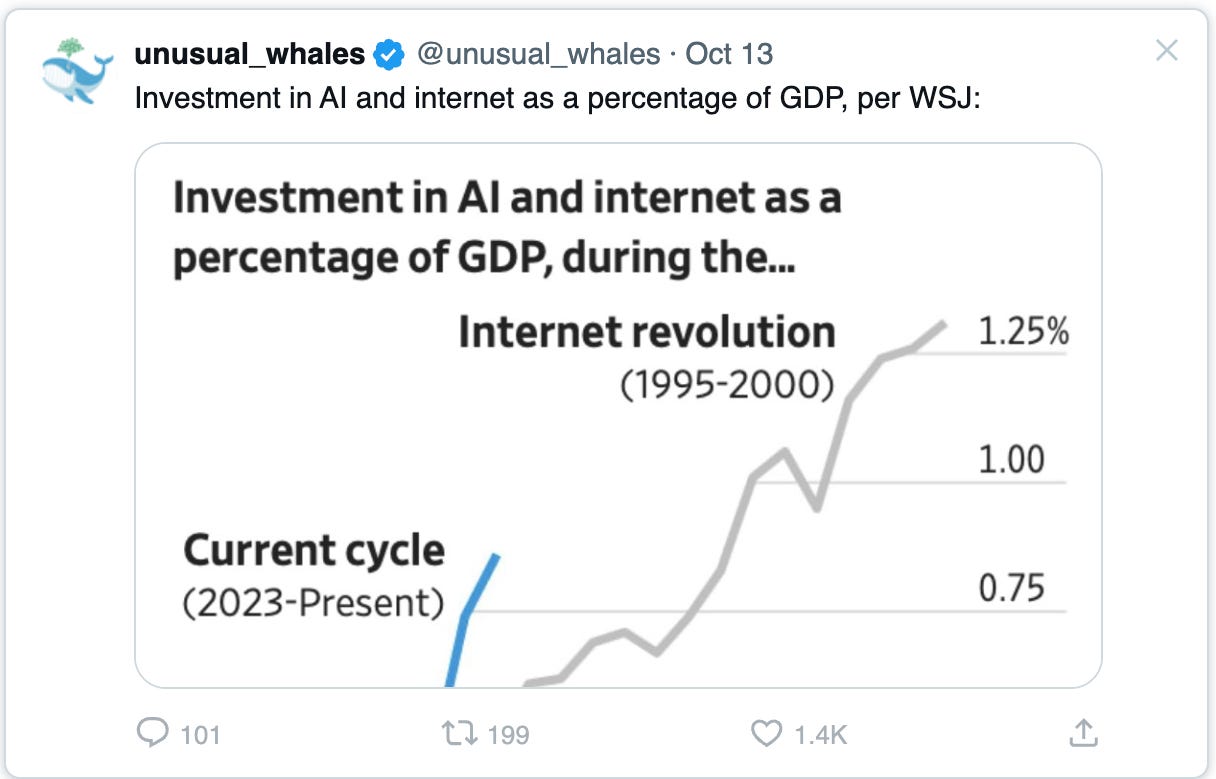

Global investment reflects this trend as well, with AI capital expenditures expected to grow another 33% next year to about $480 billion, after a 60% jump this year.

The signal here isn’t the number; it’s the direction. Capital is moving from demos to deployment, from concepts to real systems.

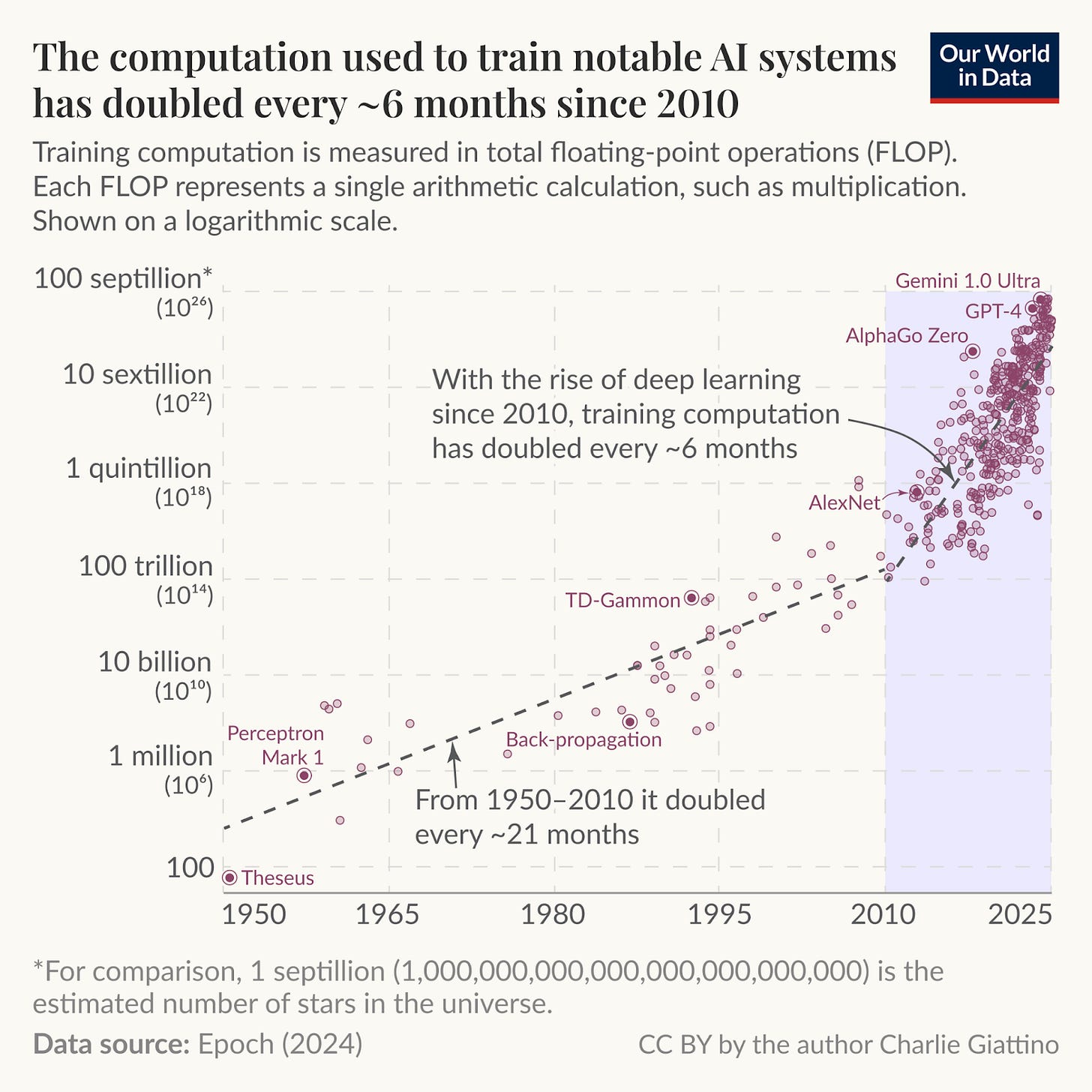

On the capability side, the curve is steeper than most technology cycles.

Since 2010, the compute used to train notable models has doubled about every six months, driven mostly by spend and only partly by hardware gains.

This is why each model generation is not a gentle step up but a lurch forward in what can be automated.
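As a worked check on what a six-month doubling time implies (plain compounding arithmetic, not an additional data point), let $G(t)$ be the growth factor in training compute after $t$ years:

```latex
G(t) = 2^{t/0.5} = 4^{t}, \qquad G(1) = 4, \qquad G(10) = 4^{10} = 2^{20} \approx 1.05 \times 10^{6}
```

In other words, a six-month doubling time means roughly 4x more compute each year and about a millionfold more over a decade.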

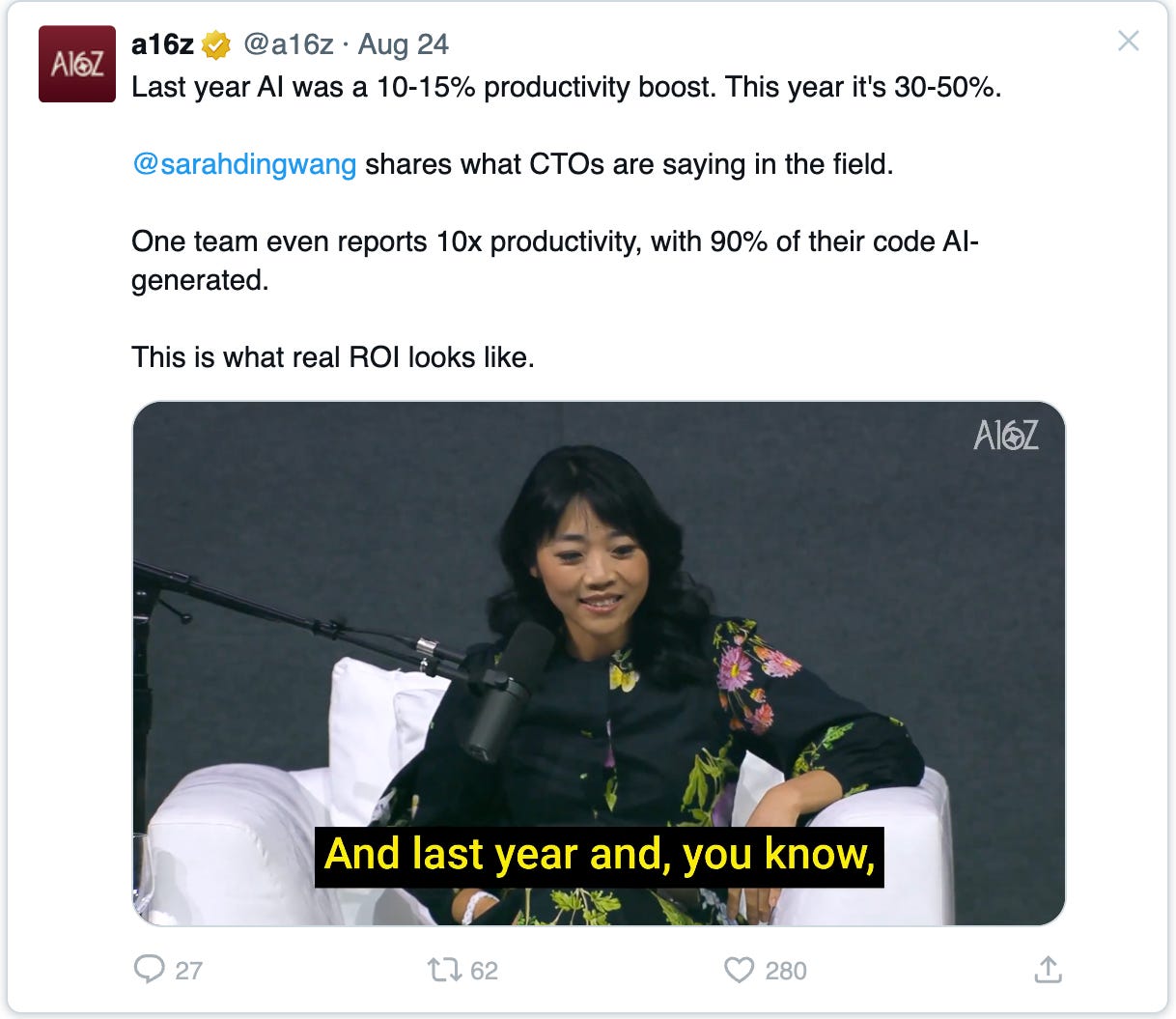

The results are already showing up in the economy.

Industries leaning heavily on AI have seen labor-productivity growth of 4.3% since 2018, compared to just 0.9% in sectors that haven’t.

That spread is the early footprint of a technology that is migrating from experiments to workflows.

These aren’t temporary bursts; they’re compounding signals of an irreversible shift.

The next logical participant in this economy is the agent: a software actor that can plan, call tools, exchange value, and coordinate with other actors.

Why AI Agents Are the Next Logical Step

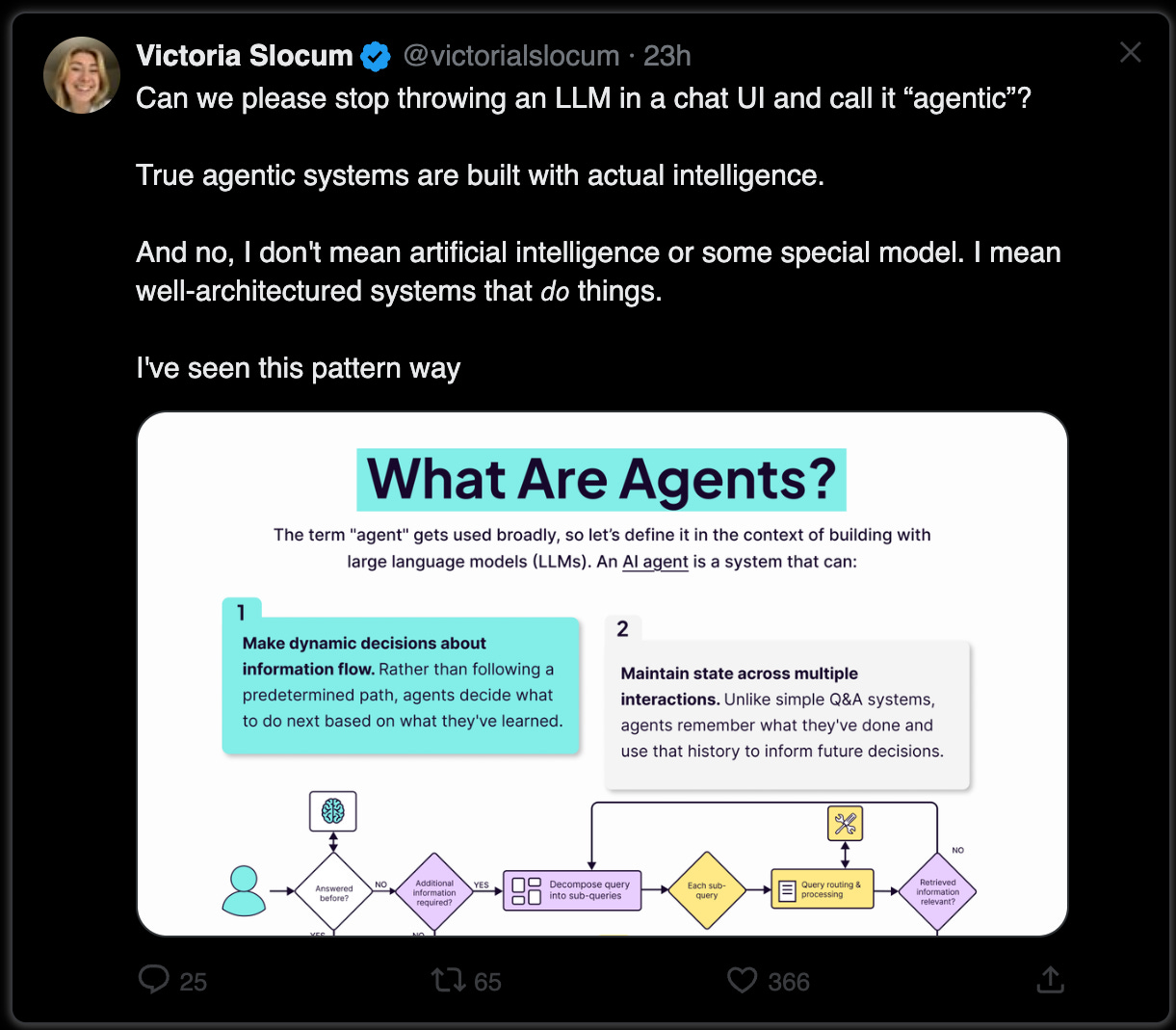

If large language models and generative AI were step one, giving us AI that can understand and create, then autonomous AI agents are the obvious next step: AI that can act.

We’re moving from using AI for knowledge (like a chatbot answering a question) to using AI for action (an agent executing a complex task).

In practice, that means instead of just responding to queries, an AI agent can plan and carry out multi-step workflows on behalf of a user or organization.

For example, rather than just suggesting a travel itinerary, an agent could actually book your flights, hotel, and reservations across various platforms in one go.

Agents will combine the flexibility of AI with the decisiveness of software.

An agent will have “agency” in the literal sense; it can make decisions, take actions, and adapt to new information without needing constant human oversight.

They’ll perform tasks autonomously by designing their own workflows and calling the tools available to them.

Instead of following a rigid if/then script, the agent will reason its way through a task, consult data or humans as needed, and learn from feedback.

But making that leap from idea to execution requires more than smarter models; it requires rethinking the web services, APIs, and trust layers these agents depend on.

For AI to act autonomously, it needs an environment where it can do things safely and reliably.

That is why the timing matters: this shift is arriving just as a new ownership and trust fabric matures, the natural counterpart to an agentic layer that is ready to act.

Intersection With Crypto and Digital Assets

It’s easy to think of AI and crypto as two separate revolutions, the first making software intelligent, the second making ownership programmable.

But when you look closer, their trajectories are converging toward the same endgame. Both are rewiring the internet around autonomy.

AI agents represent autonomous decision-making; blockchains represent autonomous trust. One gives machines the ability to act, the other gives their actions a shared reality.

Together, they form the two halves of a new coordination fabric: intelligence that can decide, and infrastructure that can verify.

For agents to function safely in the real world, they need more than just better LLMs; they need trust, authority, and context that travels with them.

Who does this agent represent? What can it spend? Which data can it use or reveal? Today, those answers live in app servers and legal contracts.

Tomorrow, they’ll live in programmable state: verifiable, auditable, and enforced by code.

That’s where blockchain becomes not just compatible with AI, but essential to it.

It provides the shared memory where agents from different organizations can coordinate without having to trust one another, and where every action carries an accountable proof of origin.

Every plan an agent makes, every resource it touches, every exchange it initiates can be traced to cryptographic truth instead of platform promises.

And once that happens, you no longer have isolated AIs or isolated ledgers. You have a new layer of the internet forming.

What’s emerging will be an internet that doesn’t just host data or communication. It will be an active environment where autonomous agents can transact, reason, and build on behalf of users.

Why We Aren’t There Yet

If AI agents are so promising, why hasn’t the agentic web already arrived?

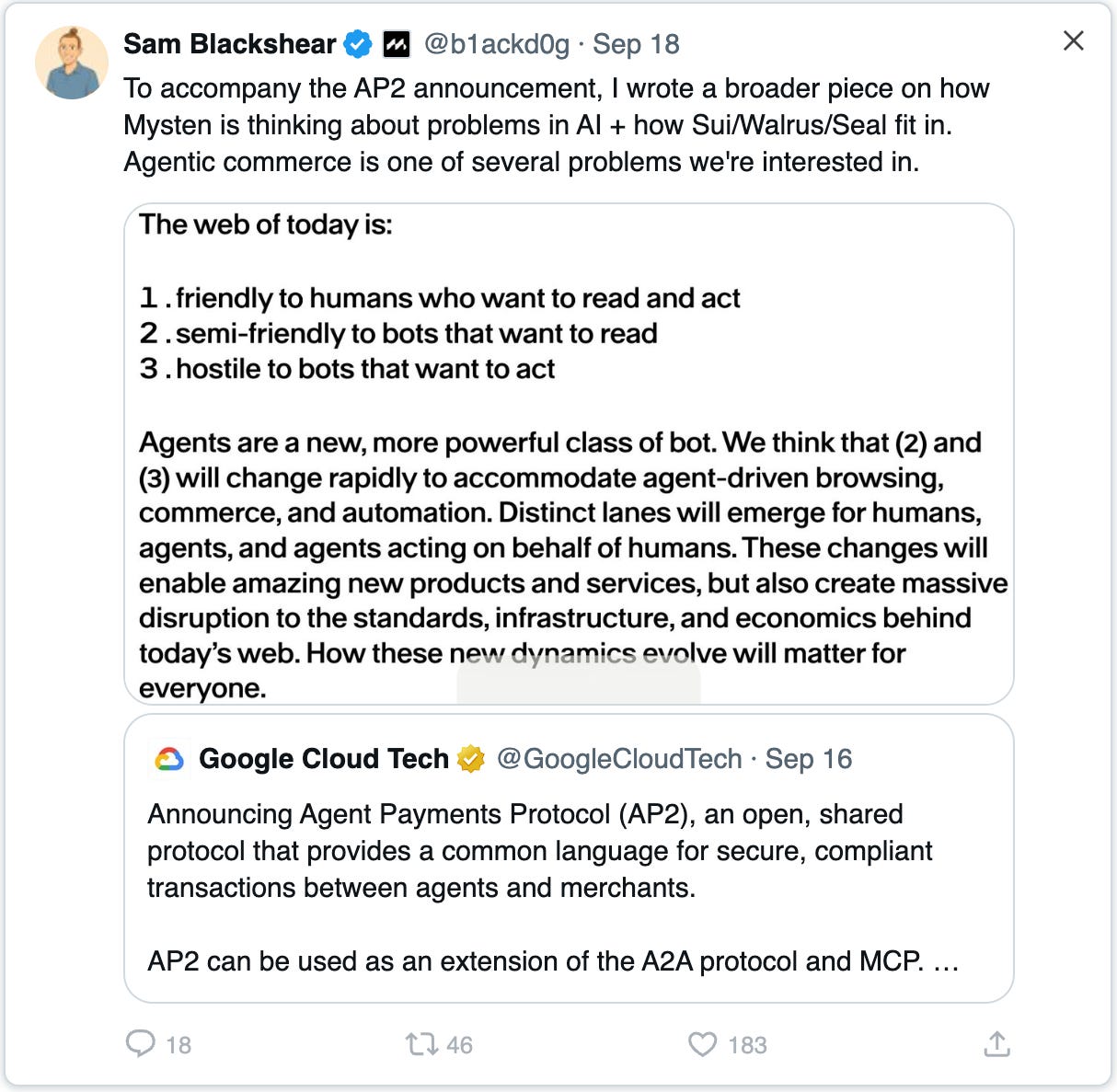

The short answer is simple: the web we have today was built for people, not AI.

It assumes that humans, with browsers and apps, are the main actors, and automation is an afterthought.

That’s why much of the internet is actually hostile to autonomous bots.

Think about it: most sites hide their data behind logins, CAPTCHAs, and rate limits. APIs demand developer keys and impose strict quotas.

Those safeguards make sense for human-driven systems, but they also mean an honest AI agent can’t roam freely or transact like a person can.

As @b1ackd0g puts it, the web is “friendly” to humans, semi-friendly to bots that just read data, but “hostile to bots that want to act.”

The Problem Isn’t Our AI; It’s the Infrastructure

Web 2.0 was optimized for human sessions with a little automation sprinkled in, not for fully autonomous processes.

Every website became a silo with its own logins, permissions, and human-facing interface.

There’s no universal identity or reputation system for bots, no standard way for an agent to prove “I’m acting for Alice, and I’m allowed to do this.”

That’s why today’s automation feels brittle, with all those scraping scripts and one-off integrations that break when a site changes.

Right now, the web still assumes a human is in the loop to fix what breaks.

If a page doesn’t load, we refresh. If a payment fails, we retry. People can tolerate ambiguity; machines can’t.

An AI agent doesn’t know how to “jiggle the handle” when something goes wrong; it expects clear, verifiable outcomes.

The trust model of the current web (passwords, emails, legal terms) is built for human judgment calls, not autonomous execution.

Moreover, the speed at which agents operate exacerbates the gap.

Autonomous agents could perform thousands of actions per second across different services, far beyond human pace.

Any manual oversight or inconsistent latency between systems becomes a scaling problem.

The current web was never intended for synchronous, high-frequency, cross-platform coordination without a human hand on the wheel.

We are essentially trying to drive race cars on roads built for horse-and-buggy traffic.

Even Data Itself Isn’t Built for This World

Information lives in silos with access policies hard-coded in app logic. Once a file leaves one system, its usage policy doesn’t travel with it.

Provenance is logged but not portable. Integrity depends on centralized attestations, not cryptographic proofs.

Agents can fetch, but they can’t verify or enforce.

As a result, the moment an agent needs to operate across multiple trust boundaries, accessing data, executing transactions, and reconciling results, the system fragments.

All of this adds up to the same core limitation: today’s internet is stateless at the seams.

It can pass messages, but not shared truth. It can route intent, but not guarantee coordinated settlement.

The Agentic Web vision flips that. It imagines an internet where machine agents are first-class citizens alongside humans.

To get there, everything from authentication to data access, transactions, and error handling needs to work at machine speed and with machine-level certainty.

For agents to truly do business on their own, they’ll need a new kind of trust fabric.

One based on cryptographic proof and atomic transactions, not just good faith and error messages.

The Next Evolution of the Internet

The internet will need to evolve from a network of data and communication into a living system where autonomous agents can reason, transact, and build on our behalf.

Their actions will have to be governed by verifiable ownership and transparent rules.

That will mark the shift from a read–write web to a read–write–act web, one that won’t wait for human input to trigger value exchange.

In that paradigm, automation and ownership must no longer live on separate layers. They will need to become two sides of the same architecture.

When those two properties converge, the internet will become not only intelligent but trustworthy by design.

This future version of the web shouldn’t feel like a patchwork of apps and APIs.

It should feel like one composable substrate where humans, agents, and organizations share the same digital fabric.

It will need to become a living system where data can prove its source, identities can express intent without revealing personal details, and every transaction leaves behind a cryptographic signature of truth.

Trust will have to shift from being enforced by institutions to being enforced by infrastructure.

The goal is a world where collaboration is native, not negotiated; where ownership is intrinsic, not implied; and where automation operates within boundaries that users control.

But realizing that vision will require more than intelligence and ambition. It will demand rails that can host these interactions safely and verifiably.

The Technical Blueprint for the Next Era

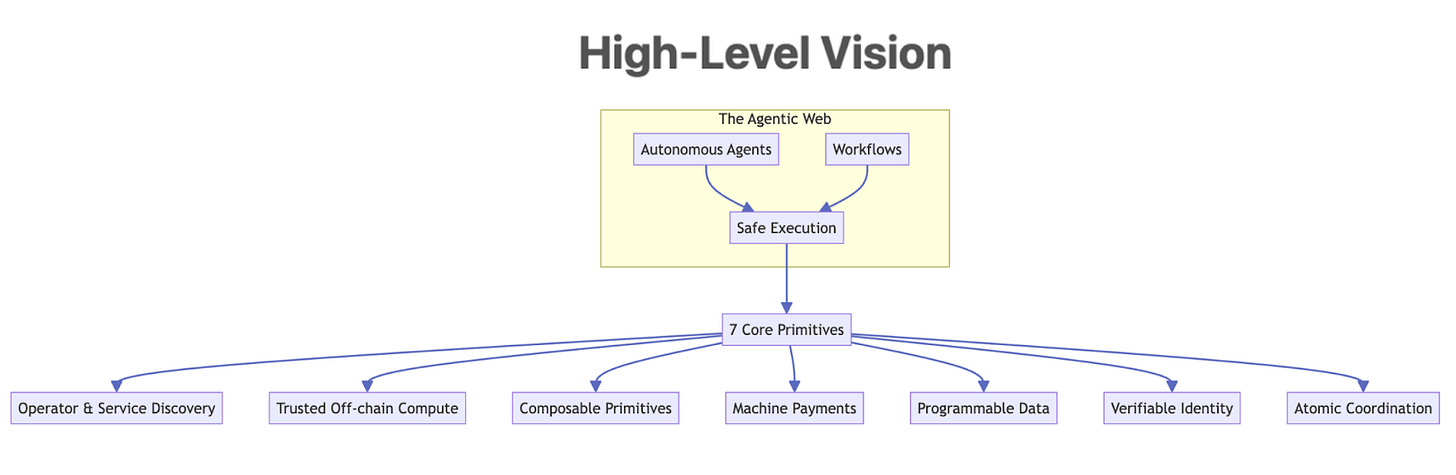

What exactly does the internet need, technically, to host autonomous agents and workflows safely?

Based on the challenges and vision outlined, we can distill a set of first-class primitives that the base layer should provide.

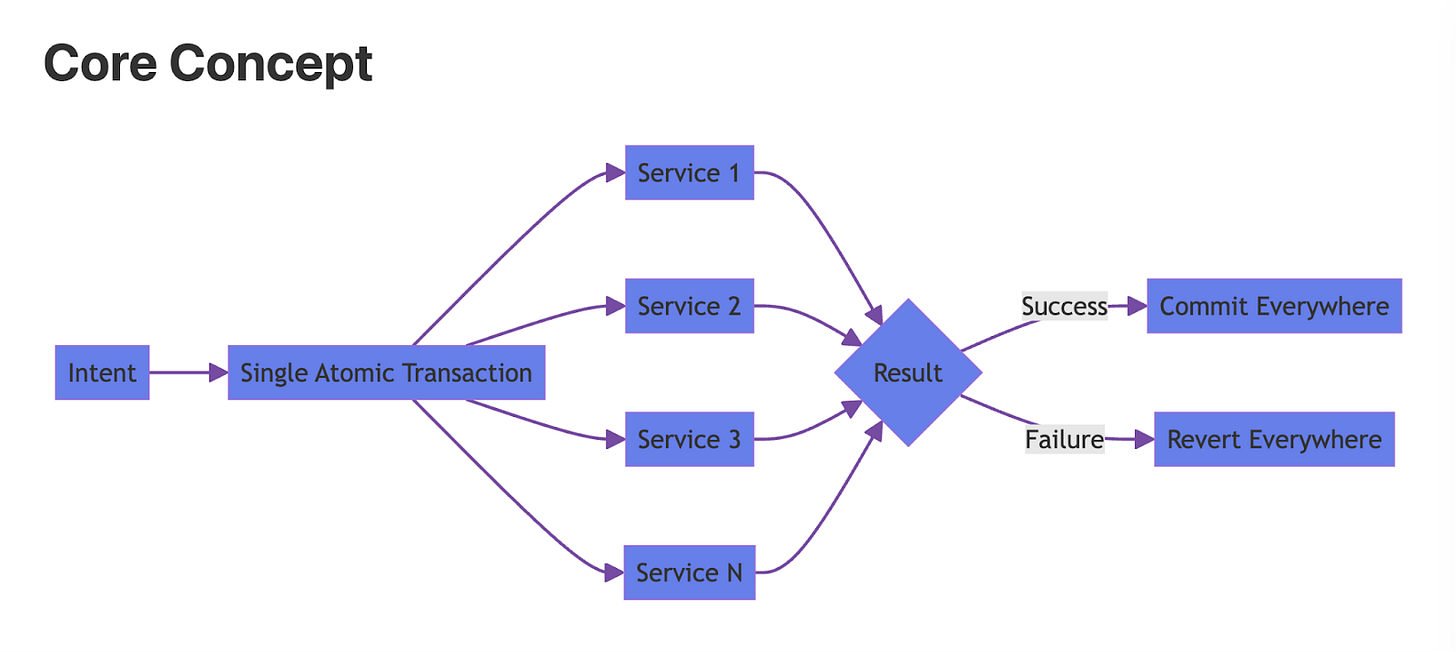

1. Atomic coordination layer:

At machine speed, you can’t rely on manual reconciliation; you need transactions that encompass entire workflows across multiple services.

In the new paradigm, one intent (like an agent’s high-level goal) should compile down to a single, atomic transaction that either commits everywhere or reverts everywhere.

This requires an execution environment that can coordinate calls to multiple contracts or services in one go.
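To make "commits everywhere or reverts everywhere" concrete, here is a minimal Python sketch of all-or-nothing execution: every step of an intent runs against a private working copy of state, and that copy replaces the real state only if every step succeeds. The `AtomicBatch` class, the `transfer` helper, and the ledger dictionary are hypothetical illustrations. On Sui, the analogous on-chain mechanism is the programmable transaction block, which this sketch does not attempt to model.

```python
import copy
from typing import Callable, Dict, List

State = Dict[str, int]
Step = Callable[[State], None]  # a step mutates the state, or raises to abort


class AtomicBatch:
    """Hypothetical all-or-nothing executor for the steps of one intent."""

    def __init__(self) -> None:
        self.steps: List[Step] = []

    def add(self, step: Step) -> "AtomicBatch":
        self.steps.append(step)
        return self  # return self so steps can be chained

    def execute(self, state: State) -> bool:
        working = copy.deepcopy(state)  # run every step on a private copy
        try:
            for step in self.steps:
                step(working)
        except Exception:
            return False  # any failure aborts the whole batch; `state` is untouched
        state.clear()
        state.update(working)  # commit all changes in one final swap
        return True


def transfer(src: str, dst: str, amount: int) -> Step:
    """Build a step that moves `amount` from `src` to `dst`, failing on overdraft."""
    def step(s: State) -> None:
        if s.get(src, 0) < amount:
            raise ValueError(f"insufficient funds in {src}")
        s[src] = s.get(src, 0) - amount
        s[dst] = s.get(dst, 0) + amount
    return step


# Usage: booking a flight and a hotel as one intent.
ledger = {"agent": 500, "airline": 0, "hotel": 0}
trip = AtomicBatch().add(transfer("agent", "airline", 300)).add(transfer("agent", "hotel", 300))
print(trip.execute(ledger), ledger)  # False {'agent': 500, 'airline': 0, 'hotel': 0}
```

The flight payment succeeds on the working copy, the hotel payment overdraws, and so neither payment lands. Copying the whole state is simply the easiest way to show the guarantee; a real execution engine achieves the same effect far more efficiently.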

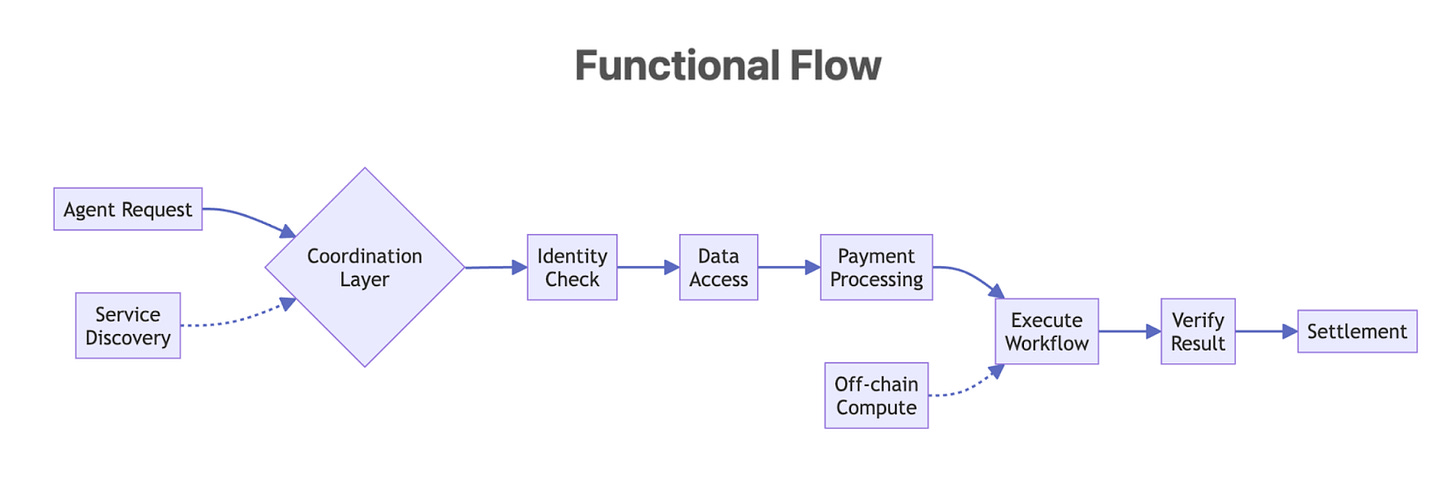

2. Verifiable identity and scoped delegation:

In an agentic web, we’ll have humans, human-owned agents, and perhaps independent agents all interacting.

We need a robust identity layer so that an agent can prove who it’s working for (or that it has a certain role or permission), and so that interactions can be authenticated end-to-end.

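This goes beyond simple public keys: a key proves control of an account, but not on whose behalf an agent is acting or what it is allowed to do.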
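A scoped delegation can be pictured as a signed grant: the principal states which agent may act, which actions it may take, how much it may spend, and until when, and a verifier checks all of that before honoring a request. The minimal Python sketch below is hypothetical throughout (`Grant`, `sign_grant`, `authorize`, and the field names are illustrative). It uses an HMAC from the standard library only to stay self-contained; an HMAC requires the verifier to hold the signing secret, so a real identity layer would use public-key signatures instead.

```python
import hashlib
import hmac
import json
import time
from dataclasses import asdict, dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Grant:
    principal: str           # who the agent acts for, e.g. "alice"
    agent: str               # the delegated agent's identifier
    actions: FrozenSet[str]  # actions the agent is allowed to take
    spend_cap: int           # maximum total spend, in integer base units
    expires_at: float        # Unix timestamp after which the grant is void


def _payload(g: Grant) -> bytes:
    """Canonical bytes for signing (sorted keys, sorted action list)."""
    d = asdict(g)
    d["actions"] = sorted(g.actions)
    return json.dumps(d, sort_keys=True).encode()


def sign_grant(g: Grant, principal_key: bytes) -> str:
    # Stand-in for a public-key signature by the principal.
    return hmac.new(principal_key, _payload(g), hashlib.sha256).hexdigest()


def authorize(g: Grant, sig: str, principal_key: bytes, agent: str, action: str,
              amount: int, spent_so_far: int, now: float) -> bool:
    """Honor a request only if every constraint in the grant holds."""
    return (
        hmac.compare_digest(sign_grant(g, principal_key), sig)  # issued by the principal
        and g.agent == agent                                    # presented by the named agent
        and action in g.actions                                 # action is in scope
        and spent_so_far + amount <= g.spend_cap                # stays under the cap
        and now < g.expires_at                                  # not yet expired
    )


# Usage
key = b"alice-demo-secret"  # hypothetical key material
grant = Grant("alice", "travel-bot", frozenset({"book_flight"}), 50_000, time.time() + 3600)
sig = sign_grant(grant, key)
print(authorize(grant, sig, key, "travel-bot", "book_flight", 30_000, 0, time.time()))       # True
print(authorize(grant, sig, key, "travel-bot", "book_flight", 30_000, 30_000, time.time()))  # False: over cap
print(authorize(grant, sig, key, "travel-bot", "transfer_funds", 100, 0, time.time()))       # False: out of scope
```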

3. Programmable data with portable policy:

As noted, data needs to carry its policies and proof of integrity wherever it goes.

This calls for content-addressed storage and on-chain data references.

Content-addressed storage means files or blobs are referenced by a hash (so anyone can verify they got the exact content) and typically stored across decentralized nodes.
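Content addressing fits in a few lines: the reference to a blob is the hash of its bytes, so whoever fetches it can recompute the hash and detect tampering, and a policy can be bound to that reference rather than to any particular server. In this minimal Python sketch, plain SHA-256 and a dictionary stand in for a decentralized store; it is not how Walrus derives its blob IDs, and `blob_id_for`, `Policy`, and `fetch_verified` are hypothetical names. The policy check here is a plain function for readability; in a real system it would be enforced cryptographically, for example by encrypting the data under policy-gated keys.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, FrozenSet


def blob_id_for(data: bytes) -> str:
    """The address of a blob is the SHA-256 hash of its bytes (illustrative scheme)."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class Policy:
    blob_id: str                     # bound to the content itself, not to a server
    allowed_readers: FrozenSet[str]

    def permits(self, blob_id: str, reader: str) -> bool:
        return blob_id == self.blob_id and reader in self.allowed_readers


def fetch_verified(store: Dict[str, bytes], blob_id: str) -> bytes:
    """Fetch from an untrusted store and check the bytes against their address."""
    data = store[blob_id]
    if blob_id_for(data) != blob_id:
        raise ValueError("content does not match its address")
    return data


# Usage: any node may hold the bytes; the reader checks integrity itself.
store: Dict[str, bytes] = {}
report = b"quarterly metrics v1"
bid = blob_id_for(report)
store[bid] = report
policy = Policy(bid, frozenset({"analyst-agent"}))

print(policy.permits(bid, "analyst-agent"), policy.permits(bid, "ad-bot"))  # True False
print(fetch_verified(store, bid) == report)                                 # True
store[bid] = b"quarterly metrics v2"  # a node silently swaps the content
try:
    fetch_verified(store, bid)
except ValueError as err:
    print("rejected:", err)  # rejected: content does not match its address
```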

4. Machine-grade payments and licensing:

Today’s payment systems (credit cards, bank ACH, etc.) are too slow and manual for agent automation.

We need on-chain payment rails that are fast, stable, and programmable, so that agents can settle value as quickly and reliably as they exchange data.

This means native support for stablecoins or digital currency that agents can use 24/7 globally.

It also means transaction formats that can handle complex payment logic: things like refunds, escrow, revenue splits, royalties, subscription payments, all executed automatically per the transaction’s outcome.
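Escrow, refunds, and revenue splits can all be expressed as one settlement rule keyed to the outcome of a task. This minimal Python sketch assumes amounts are integers in the currency's smallest unit (as token balances are on-chain) and that splits are given in basis points; `Escrow`, `settle`, and the party names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Escrow:
    payer: str
    amount: int                 # integer base units, never floats
    splits_bps: Dict[str, int]  # payee -> share in basis points (must sum to 10_000)
    settled: bool = False

    def __post_init__(self) -> None:
        if sum(self.splits_bps.values()) != 10_000:
            raise ValueError("splits must sum to 10,000 basis points")

    def settle(self, task_succeeded: bool) -> Dict[str, int]:
        """Release funds by the split on success, or refund the payer in full."""
        if self.settled:
            raise RuntimeError("escrow already settled")
        self.settled = True
        if not task_succeeded:
            return {self.payer: self.amount}
        payouts = {p: self.amount * bps // 10_000 for p, bps in self.splits_bps.items()}
        # Integer division can leave dust; give it to the first payee so totals match.
        first = next(iter(payouts))
        payouts[first] += self.amount - sum(payouts.values())
        return payouts


# Usage: a data provider and a model host share revenue 70/30.
job = Escrow("agent", 1_000_001, {"data-provider": 7_000, "model-host": 3_000})
print(job.settle(task_succeeded=True))
# {'data-provider': 700001, 'model-host': 300000}
print(Escrow("agent", 500, {"data-provider": 10_000}).settle(task_succeeded=False))
# {'agent': 500}
```

Working in integer base units and assigning rounding dust explicitly keeps every settlement summing exactly to the escrowed amount, which matters when no human is around to reconcile a stray cent.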

5. Trusted off-chain compute:

Not everything can run on-chain for performance or cost reasons, especially heavy AI computations or data processing. Yet, we can’t just do things off-chain and lose trust.

The solution is trusted off-chain compute: running code off the blockchain while producing verifiable evidence, such as a hardware attestation or a cryptographic proof, of what code ran and what it produced.
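One common way to get such guarantees is attestation: the off-chain worker signs a statement binding the hash of the code it ran, the hash of its input, and its output, and the verifier accepts the result only if the signature checks out and the code hash matches one approved in advance. The sketch below is a hypothetical, simplified model. A real trusted execution environment attests with a hardware-rooted key and public-key signatures; this uses an HMAC only so it runs on the standard library. `attest`, `verify_attestation`, `WORKER_CODE`, and `APPROVED_CODE` are illustrative names.

```python
import hashlib
import hmac
import json


def h(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Hypothetical registry of code measurements the verifier trusts in advance.
WORKER_CODE = b"def score(x): return sum(x) / len(x)"
APPROVED_CODE = {h(WORKER_CODE)}


def _sign(key: bytes, stmt: dict) -> str:
    msg = json.dumps(stmt, sort_keys=True).encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


def attest(enclave_key: bytes, code: bytes, inp: bytes, output: str) -> dict:
    """Runs off-chain: bind code, input, and output together under the enclave key."""
    stmt = {"code": h(code), "input": h(inp), "output": output}
    return {**stmt, "sig": _sign(enclave_key, stmt)}


def verify_attestation(enclave_key: bytes, att: dict, inp: bytes) -> bool:
    """Accept only authentic statements, from approved code, about our own input."""
    stmt = {k: att[k] for k in ("code", "input", "output")}
    return (
        hmac.compare_digest(_sign(enclave_key, stmt), att["sig"])
        and att["code"] in APPROVED_CODE
        and att["input"] == h(inp)
    )


# Usage
key = b"enclave-demo-key"  # stand-in for a hardware-protected key
data = b"[3, 4, 5]"
att = attest(key, WORKER_CODE, data, "4.0")
print(verify_attestation(key, att, data))                                  # True
print(verify_attestation(key, {**att, "output": "9.9"}, data))             # False: output tampered
print(verify_attestation(key, attest(key, b"evil()", data, "4.0"), data))  # False: unapproved code
```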

6. Composable primitives and interfaces:

To accelerate development and interoperability in this new ecosystem, we can’t have every project reinvent the wheel.

We need a standard toolbox of primitives, something like the POSIX standard for operating systems, but for the agentic web.
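The point of a shared toolbox is that code written once against an interface works with any conforming provider, including providers composed from other providers. As a minimal sketch, here is a hypothetical `BlobStore` interface expressed with Python's `typing.Protocol`; the method names and the two toy providers are illustrative, not an existing standard.

```python
import hashlib
from typing import Dict, List, Protocol


class BlobStore(Protocol):
    """Hypothetical standard interface: any provider exposing these methods fits."""

    def put(self, data: bytes) -> str: ...
    def get(self, blob_id: str) -> bytes: ...


class MemoryStore:
    def __init__(self) -> None:
        self._d: Dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        blob_id = hashlib.sha256(data).hexdigest()
        self._d[blob_id] = data
        return blob_id

    def get(self, blob_id: str) -> bytes:
        return self._d[blob_id]


class ReplicatedStore:
    """Composes other stores: writes to every replica, reads from the first that has it."""

    def __init__(self, replicas: List[BlobStore]) -> None:
        self.replicas = replicas

    def put(self, data: bytes) -> str:
        ids = {r.put(data) for r in self.replicas}
        if len(ids) != 1:
            raise ValueError("need at least one replica, all agreeing on the address")
        return ids.pop()

    def get(self, blob_id: str) -> bytes:
        for r in self.replicas:
            try:
                return r.get(blob_id)
            except KeyError:
                continue
        raise KeyError(blob_id)


def archive_result(store: BlobStore, result: bytes) -> str:
    """Agent-side code written against the interface, not a specific provider."""
    return store.put(result)


for store in (MemoryStore(), ReplicatedStore([MemoryStore(), MemoryStore()])):
    bid = archive_result(store, b"agent run #1 output")
    print(type(store).__name__, store.get(bid) == b"agent run #1 output")
# MemoryStore True
# ReplicatedStore True
```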

7. Operator and service discovery:

We need a marketplace where developers can discover storage, key services, and other operators, pay for them programmatically, and plug in with consistent observability and versioning.
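At its core, a discovery layer is a registry that agents can query by capability, compatible version, and price. This Python sketch is hypothetical: `Offer`, `Registry`, the capability strings, and the "same major version is compatible" rule are illustrative assumptions, and the `healthy` flag is a stand-in for real observability signals. A production marketplace would also need payment, reputation, and monitoring.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Offer:
    operator: str
    capability: str       # e.g. "storage", "key-service"
    version: str          # semantic version "MAJOR.MINOR.PATCH"
    price_per_call: int   # integer base units
    healthy: bool = True  # stand-in for observability signals


def major(version: str) -> int:
    return int(version.split(".")[0])


class Registry:
    def __init__(self) -> None:
        self._offers: List[Offer] = []

    def register(self, offer: Offer) -> None:
        self._offers.append(offer)

    def discover(self, capability: str, required_major: int,
                 max_price: int) -> Optional[Offer]:
        """Cheapest healthy offer for a capability with a compatible major version."""
        candidates = [
            o for o in self._offers
            if o.capability == capability
            and major(o.version) == required_major
            and o.price_per_call <= max_price
            and o.healthy
        ]
        return min(candidates, key=lambda o: o.price_per_call, default=None)


# Usage
registry = Registry()
registry.register(Offer("op-a", "storage", "2.1.0", 12))
registry.register(Offer("op-b", "storage", "2.3.1", 9))
registry.register(Offer("op-c", "storage", "3.0.0", 5))                 # incompatible major
registry.register(Offer("op-d", "storage", "2.0.4", 7, healthy=False))  # failing health checks
best = registry.discover("storage", required_major=2, max_price=10)
print(best.operator if best else None)  # op-b
```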

These are not luxuries. They are the minimum spec for a real agentic web.

How We’re Building Toward That Vision

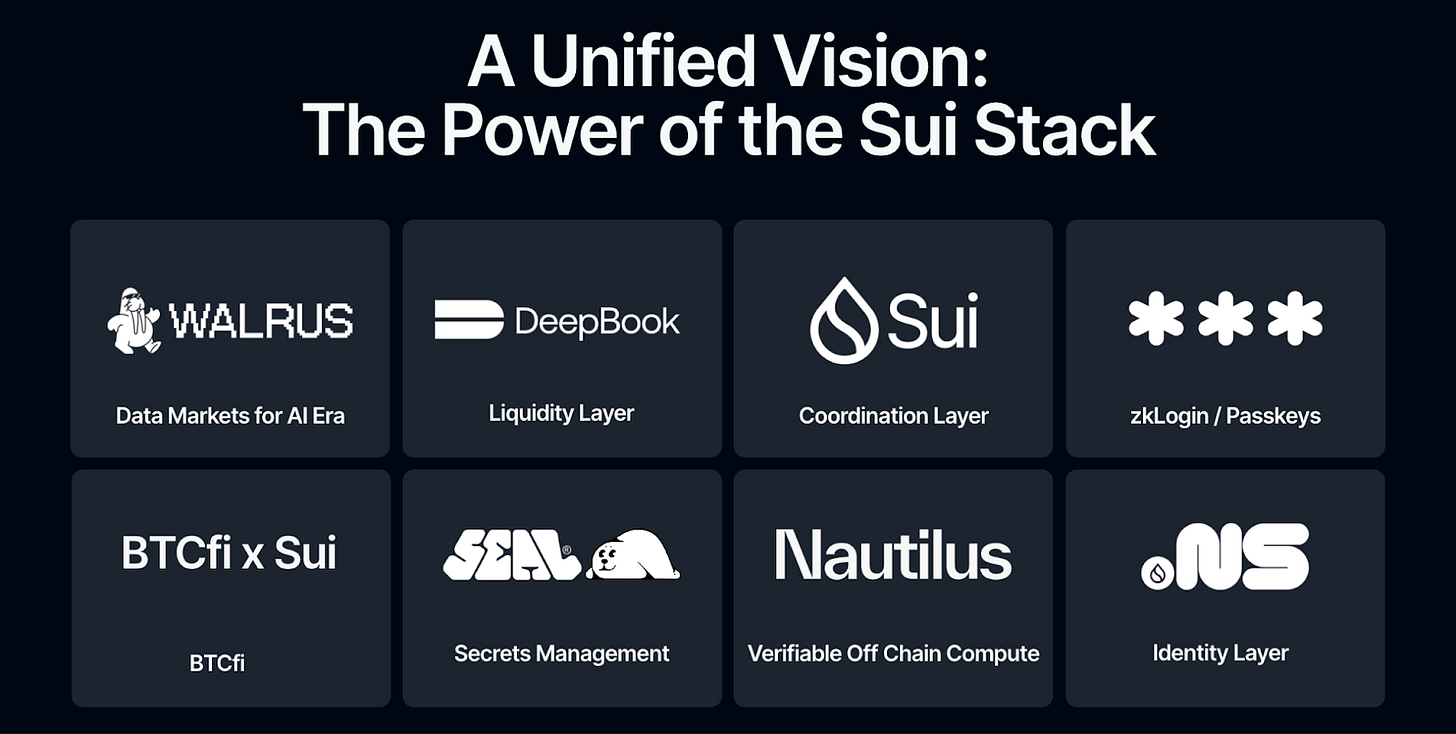

The Sui stack already includes the core ingredients for this blueprint.

Sui provides the coordination surface where intent can become an atomic outcome.

Walrus gives content‑addressed storage for large artifacts that agents can reference and reuse.

SEAL brings encryption and policy so data access is scoped and auditable.

zkLogin and Passkey anchor identity and delegation so humans and their agents can act with bounded authority.

DeepBook supplies liquidity rails that fit inside programmable flows.

Nautilus adds trusted compute when heavy lifting must happen off chain without breaking trust.

SuiNS and Walrus Sites round out naming and web hosting so applications have stable entry points and distribution.

Taken together, these pieces map directly to what autonomy needs in practice: coordination, identity, data, payments, compute, and a way to discover and ship.

The work now is to turn a capable stack into a single, composable surface that any developer can use.

Let me explain why!

The Integration Wall

The truth is, what we’ve built so far is enough to stir excitement in the Web3 space.

Each part of the stack, from coordination to storage, encryption, identity, and liquidity, solves a real problem.

But there is a difference between having the right parts and having a product people can use. Each service has its own SDK, its own CLI, its own documentation style, its own release cadence.

Each new integration can feel less like adding capability and more like adding cost.

What should have been a network of composable systems still behaves like a set of independent tools stitched together with developer patience.

As complexity compounds, momentum slows. This isn’t a failure of vision but of abstraction.

The challenge now is not inventing new primitives; it’s harmonizing the ones that already exist.

We need to make the act of building on this infrastructure feel less like stitching protocols and more like writing software. That’s how we move from a tech stack to a developer platform, from protocols to a product experience.

And that’s where the real work begins: building for the next generation of developers who will never think of themselves as “Web3 builders” at all, yet will depend on these systems every day.

Building for the Next Generation of Developers

So here’s the truth: the average Web2 or AI developer doesn’t care about block heights or consensus types; they care that their apps scale and that systems behave predictably under load.

To persuade these builders to use the components we have built, the infrastructure must feel familiar.

The cryptography should fade into the background, and what’s left should feel like software, not ceremony.

This shift in audience changes everything. It’s not just a design problem; it’s a product philosophy.

Building for Web2 and AI developers means leading with DevEx, not ideology.

It means abstracting the primitives of verification and ownership into interfaces anyone can use, without asking them to learn a new mental model of the internet.

If the first era of Web3 was about proving what’s possible, this one is about making it practical: reliable rails for people who just want to build things that work and can be trusted by default.

Because ultimately, infrastructure adoption isn’t driven by conviction; it’s driven by convenience.

That’s how you grow beyond an ecosystem and start shaping the substrate of the next internet. But getting there isn’t about adding more components.

It’s about bringing the existing ones into harmony.

Reimagining the Stack as a System

The next phase is not about minting more protocols; it is about coherence.

Coordination, storage, encryption, and liquidity have to behave like parts of one organism that evolves together.

When a developer adds the second and third capability, the system should feel easier, not harder.

That is the benchmark for sublinear integration cost.
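To pin down what "sublinear integration cost" could mean, here is one illustrative formalization (an assumption of this sketch, not a metric defined elsewhere): let $c_k$ be the marginal effort of adding the $k$-th capability and $C(n)$ the total effort of integrating $n$ capabilities.

```latex
C(n) = \sum_{k=1}^{n} c_k, \qquad
\text{linear: } c_k = c \;\Rightarrow\; C(n) = c\,n, \qquad
\text{sublinear: } c_k \to 0 \;\Rightarrow\; \frac{C(n)}{n} \to 0
```

With $c_k = c/\sqrt{k}$, for example, the exact sum gives $C(10) \approx 5.02\,c$: ten capabilities cost about five times as much as the first one rather than ten times, and $C(n)$ grows like $2c\sqrt{n}$.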

Each protocol should reinforce the others, abstracting away its own complexity and exposing a consistent surface for the builder.

Only then can developers think in outcomes rather than integrations, in workflows rather than wiring.

This is how the stack matures into a system.

It should all feel like one substrate, one mental model, one way of building.

And when that happens, something deeper changes.

From Protocol Builders to Platform Builders, With DevEx at the Center

The shift from protocols to platforms isn’t just a technical evolution; it’s a philosophical one.

For years, the focus was on building the primitives: the coordination layer, the storage layer, the encryption layer, the identity rails.

The next leap forward isn’t about perfecting the components; it’s about orchestrating them.

Because in the end, the success of a protocol isn’t measured by how elegant its design is, but by how easily people can build on top of it.

The goal is simple.

Every developer should be able to access blockchain trust and programmability without feeling like they are using a blockchain.

We want the infrastructure to become invisible. We want it to feel intuitive, reliable, and deeply aligned with how builders already work.

And once that happens, momentum compounds. More developers mean more applications, more use cases, more composability.

The stack stops being a collection of technologies and starts becoming a gravitational field for innovation.

That’s the inflection point we’re moving toward.

The Next Steps

The next chapter is about focus and refinement.

We have already proven that the primitives work: the rails for coordination, storage, identity, and data policy are live.

The challenge now is to bring those capabilities together into a single, coherent developer experience and scale it beyond the crypto-native world.

That means consolidating the tools, aligning the workflows, and building the kind of platform that developers instinctively reach for when they need reliability, not ideology.

It’s less about launching the next protocol and more about making the entire stack feel seamless, familiar, and ready for mass adoption.

The immediate work ahead is to build the connective tissue.

Each component of the system must not just coexist but collaborate, sharing the standards, APIs, and tooling that make integration effortless.

Documentation, SDKs, and marketplaces will be unified around clarity, speed, and accessibility.

Instead of separate touchpoints for each service, there will be one surface for builders. And that unification won’t happen in theory; it will be driven by practice.

This phase is about operational maturity: We’re turning innovation into reliability, complexity into simplicity, and potential into usability.

Our bet is simple.

We are aligning product, tooling, and ecosystem around one goal: to make verifiable systems feel like normal software to build with, and to make coordination at internet scale reliable by default.

Powering the Verifiable Internet In The Agentic Era

We see where the curve is bending.

Software is becoming a network of actors that decide, transact, and collaborate at machine speed. And the winners will be the platforms that turn this shift into something builders can use with confidence.

Sui is being set up to be that default.

In an era where speed and certainty decide market share, we intend to be the platform that product teams reach for first.

